Google SafeSearch is a feature that helps filter explicit content from appearing in search results. It is designed to filter out pages with images or videos that contain nudity, as well as pages with links, pop-ups, or ads that contain explicit content. SafeSearch relies on automated systems and machine learning to identify explicit content, including words on the hosting web page and in links.

SafeSearch is turned on by default for users under 18, and users must be logged in to turn it off. In a Google blog post titled “Creating a safer internet for everyone”, the company introduced a new SafeSearch setting that automatically blurs explicit images in search results for the majority of users.

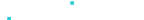

This new setting will blur explicit imagery if it appears in search results when SafeSearch filtering isn’t turned on. This setting will be the new default for people who don’t already have the SafeSearch filter turned on, with the option to adjust settings at any time.

As you can see from the example above, if someone searches for “Dismounted Complex Blast Injury (DCBI)”, Google automatically blurs the image and warns the user that it “may contain explicit content”.

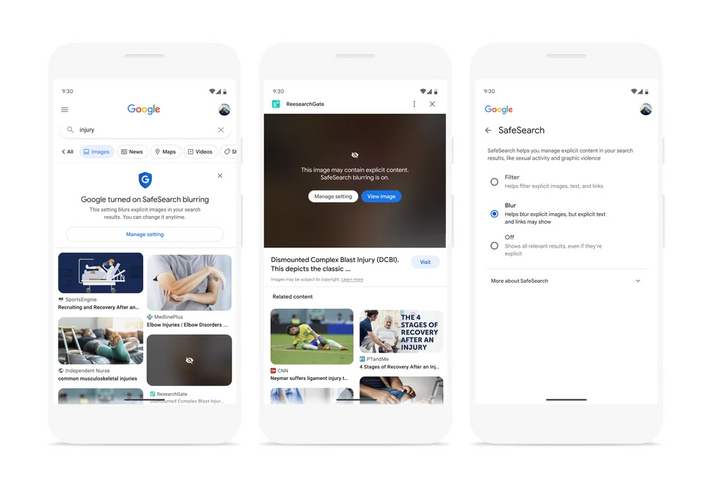

Users can then choose to turn on SafeSearch or continue on to view the image. Clicking ‘Manage setting’ offers three options: Filter (no explicit images are shown), Blur (Google blurs explicit images and asks whether you want to see them), and Off (all explicit images are shown with no blurring).

With this change, Google hopes to prevent those under 18 from viewing and accessing explicit content. We doubt, however, that it will be an effective deterrent.